What I tried was to let DHCP assign the WAN port IP and also let the static route get its gateway IP dynamically (this option only shows up in the static route when I allow DHCP IP assignment on WAN port 1). What I have noticed is that the DHCP IP of the WAN is 192.168.132.152 and not 192.168.132.2, .3, .4, etc. I have tested this twice. I also saw that the dynamically assigned static route IP is 192.168.132.2 (my laptop's vmnet8 IP is 192.168.132.1). Now I just don't understand who is assigning this IP to the static route. If it's the VMware DHCP, then how, or to what, is it getting assigned? Or is it just a thing between VMware and FortiGate?
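To keep the three addresses straight, here is a minimal Python sketch that checks whether they all sit in the same subnet, which is what a directly connected gateway requires. The /24 prefix length is my assumption (the mask is not stated above), and the labels are only descriptive names I made up for this example.

```python
import ipaddress

# Assumption: vmnet8 uses a /24 mask; the post does not state the prefix length.
VMNET8 = ipaddress.ip_network("192.168.132.0/24")

# Addresses quoted in the post; the labels are descriptive only.
ADDRESSES = {
    "laptop vmnet8 adapter": "192.168.132.1",
    "static route gateway (dynamic)": "192.168.132.2",
    "FortiGate WAN port 1 (DHCP)": "192.168.132.152",
}


def on_link(address, network=VMNET8):
    """Return True if the address belongs to the given network."""
    return ipaddress.ip_address(address) in network


def report():
    """Map each label to whether its address is inside the vmnet8 network."""
    return {label: on_link(addr) for label, addr in ADDRESSES.items()}


if __name__ == "__main__":
    for label, ok in report().items():
        status = "on-link" if ok else "NOT on-link"
        print(f"{label}: {status}")
```

If the real mask is narrower than /24, change `VMNET8` accordingly and rerun the check.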

#Fortigate vm routing manual#

Sorry to bring this back again, but it has started working. What happened was that I had been manually setting both the WAN port 1 IP address and the static route gateway to the IP address of the laptop's vmnet8 adapter, which is 192.168.132.1. This is like a hairpin, but without the NAT.

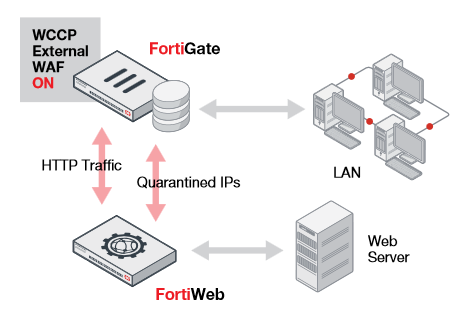

First off, I am not a network/Fortinet guy, so forgive my lack of knowledge in this area.

Behind the transit interface are multiple subnets. All subnets can ping the transit interface of the FortiGate. What I cannot get to work is connectivity between subnets via the FortiGate. The policies are in place to allow the traffic, and I do see inbound traffic hitting the counters. The only way I can get this communication to work is to enable NAT on the policy. This works fine for ping but will break directed IP traffic.

I have all of the static routes in place and have no issues with FortiGate-to-subnet traffic; it's only when the traffic traverses the FortiGate between the subnets. I can only imagine it has something to do with the interface being the same for ingress and egress. I don't think this could trigger RPF rules, since the routes are there (flow logs do not show any issues that I can see). I cannot use VLANs to separate the traffic, and I cannot utilize additional interfaces. I also tried setting the `allow-traffic-redirect` option to enabled, and it didn't seem to help. Also, I did find this posting, "Virtual Fortinet with VLAN Tagging" ("I am using a Fortinet VM on an ESXi host"), but I must be missing something.

Here's a bit more context; I didn't want to bring it up since it might muddy the waters a bit. This is in Azure and is running a multi-subscription model. There are multiple VNets peered back to the VNet where the FortiGate is placed. Each subnet (and each host) has a default route pointing to the transit interface IP of the FortiGate. So, from the FortiGate's perspective, all of these other networks are reachable via the same interface, and all communication in both directions will use that single interface. It is kind of like a router on a stick, but without the VLANs. The details of why this happens are all based on the architecture of Azure. It is a bit different, but it makes sense in the context of Azure.

When I turn on NAT on the policies, the FortiGate does source NAT to the IP address of the interface. The hosts just respond to that IP and it works. I have done packet captures at all points: I see the data get to the firewall, but it does not then leave the firewall (when not using NAT). So I am fairly sure that the firewall is preventing this traffic, but I'm not sure why.
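To illustrate the "same interface for ingress and egress" situation described above, here is a rough Python sketch of a longest-prefix route lookup. This is not how FortiOS is implemented; it only models the idea. The prefixes and the interface name `port1` are hypothetical placeholders, since the real subnets are not listed in this thread.

```python
import ipaddress

# Hypothetical routing table: (destination prefix, egress interface).
# Every route points out of the single transit interface.
ROUTES = [
    ("10.1.0.0/16", "port1"),
    ("10.2.0.0/16", "port1"),
    ("0.0.0.0/0", "port1"),
]


def lookup(dst, routes=ROUTES):
    """Return (network, interface) for the longest matching prefix, or None."""
    addr = ipaddress.ip_address(dst)
    matches = []
    for prefix, interface in routes:
        network = ipaddress.ip_network(prefix)
        if addr in network:
            matches.append((network, interface))
    if not matches:
        return None
    # The most specific route (largest prefix length) wins.
    return max(matches, key=lambda match: match[0].prefixlen)


def is_hairpin(ingress, dst):
    """True when the packet would leave through the interface it arrived on."""
    route = lookup(dst)
    return route is not None and route[1] == ingress


if __name__ == "__main__":
    # A host in one hypothetical subnet sends to a host in another.
    print(lookup("10.2.3.4"))
    print(is_hairpin("port1", "10.2.3.4"))
```

With a single transit interface, every inter-subnet flow comes back as a hairpin in this model, which matches the "router on a stick without the VLANs" description.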